Cvent

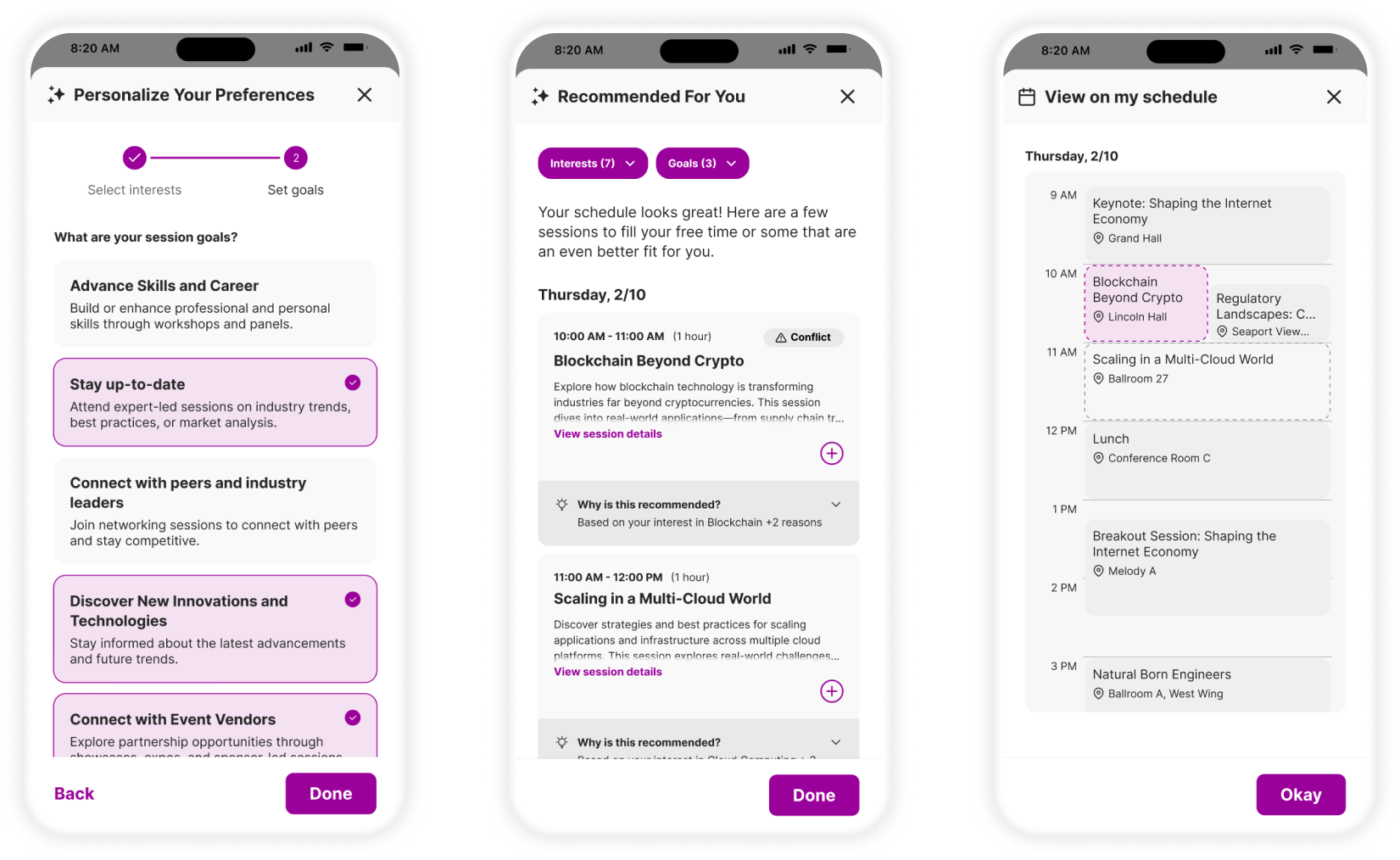

An AI-powered session recommendation system designed to help attendees at large-scale events cut through choice overload and build a personalized, conflict-aware agenda without decision paralysis.

3

Productive workshops facilitated

2

Rounds of usability testing before dev handoff

100%

Of usability testing participants found the design intuitive by round 2

My role

End-to-end ownership, from design strategy and research to dev handoff

Tools

Figma · FigJam · Figma Make · Mixpanel · Sigma

Duration

8 months

Team

Senior Product Designer (me) · UX Research · Content Design · Localization · 2 PMs · Engineering

Cvent's Attendee Hub is an all-in-one digital event platform that powers virtual, hybrid, and in-person experiences. It centralizes session browsing and scheduling, networking and engagement, content delivery, and gamification. Event planners control when the platform becomes available to attendees, typically after attendees register for an event and some time before the event begins.

At large, multi-day events like conferences, attendees routinely faced a flat, unfiltered list of 50+ sessions with no personalization. The friction was twofold:

Sheer volume

A flat list of 50+ sessions forced attendees to manually scan every option for each day, creating choice overload with no clear path to prioritization.

Lack of relevance

No clear indication of why a session might be worth attending forced attendees to read every description individually, which was time-consuming, frustrating, and error-prone.

The downstream effect was predictable: attendees missed valuable sessions, picked ones that weren't a good fit, and left events feeling underwhelmed.

How might we help attendees build their event agenda so they get the most value from sessions while avoiding cognitive overload and decision paralysis?

The business case was clear: better session engagement leads to higher attendee satisfaction, stronger ROI for planners, and more compelling events for returning exhibitors and speakers.

The project moved through five distinct phases, each building directly on the last: the audit informed the workshops, the workshops shaped ideation, ideation constrained the design, and two rounds of testing refined it.

Research & Audit

Existing research · data analysis · internal audit of existing patterns

Problem Discovery

Define problem · constraints · users · assumptions · cross-functional workshop

Ideation & Scoping

JTBD journey map · ideation workshops · OST · scope decisions

Design & Iteration

Wireframes → hi-fi · multiple iterations · feasibility checks · stakeholder reviews

Usability Testing ×2

Round 1 → received feedback · improved designs · Round 2 → validated · dev handoff prep

Phase 01

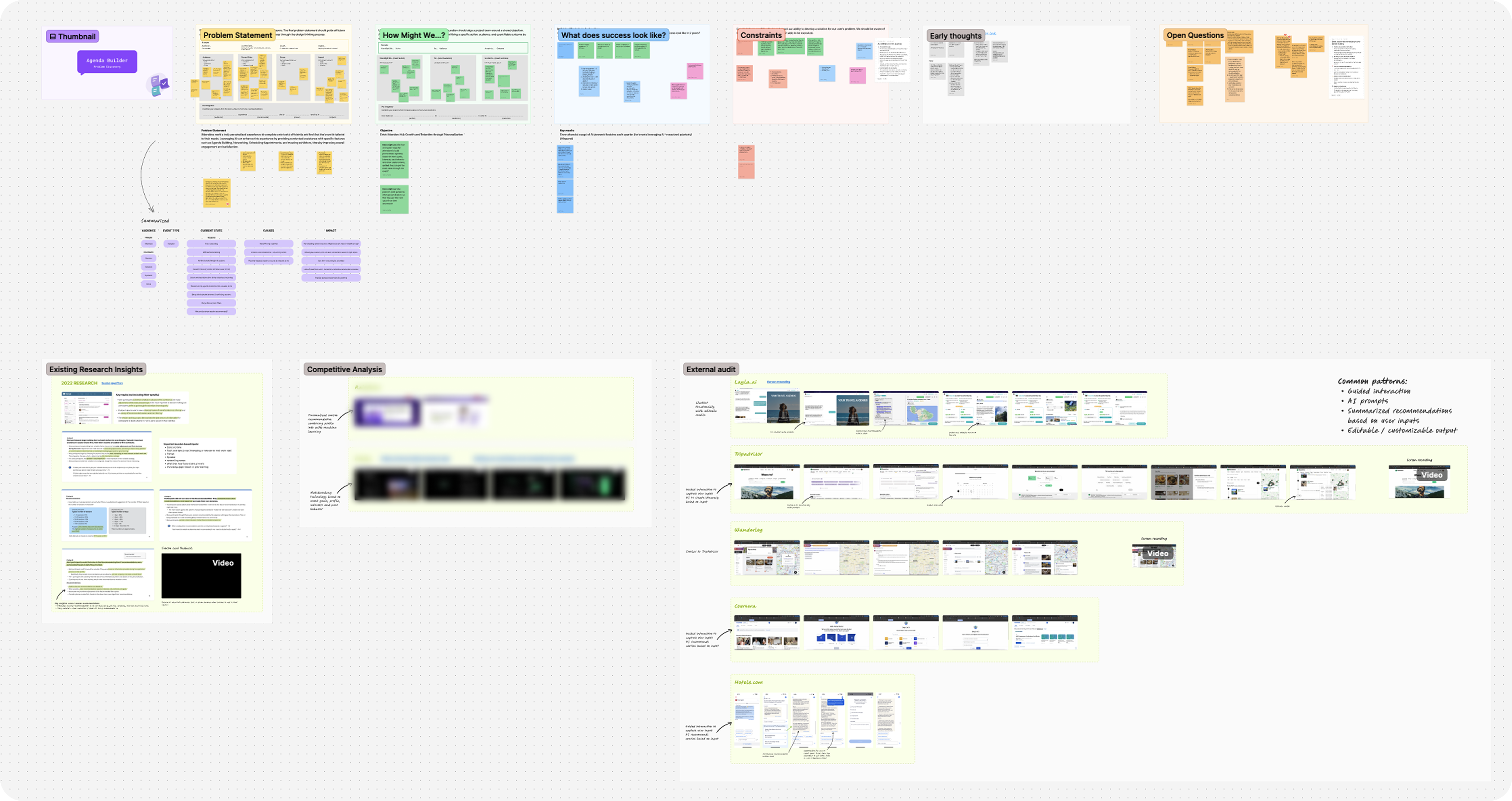

The project began with a clear OKR: simplify agenda building for event attendees. Before running any workshops or drawing any wireframes, I invested time in building a deep, evidence-based understanding of the problem space. This meant reviewing all existing research insights related to agenda building, analyzing user behavior data in Mixpanel and Sigma, and conducting a thorough internal audit of the current agenda-building experience across all products.

This phase was less visible but foundational. Without it, the workshops that followed would have been speculative. With it, I could walk into the room with validated pain points, concrete data, and a clear picture of what was already known and what wasn't.

Phase 02

Armed with that research, I planned and facilitated a problem discovery workshop with product, research, and design partners. The goal wasn't to brainstorm solutions; it was to collectively agree on the problem. Together, we defined the problem statement, surfaced the constraints we'd be designing within, identified who the users were and what we already knew about them, listed the open questions we'd need to answer, and made our assumptions explicit so we could track and test them.

The output was alignment: a shared, documented problem statement that the whole cross-functional team could point back to throughout the project. This step made every subsequent decision faster and less contentious, because we had already agreed on what we were solving for.

Phase 03

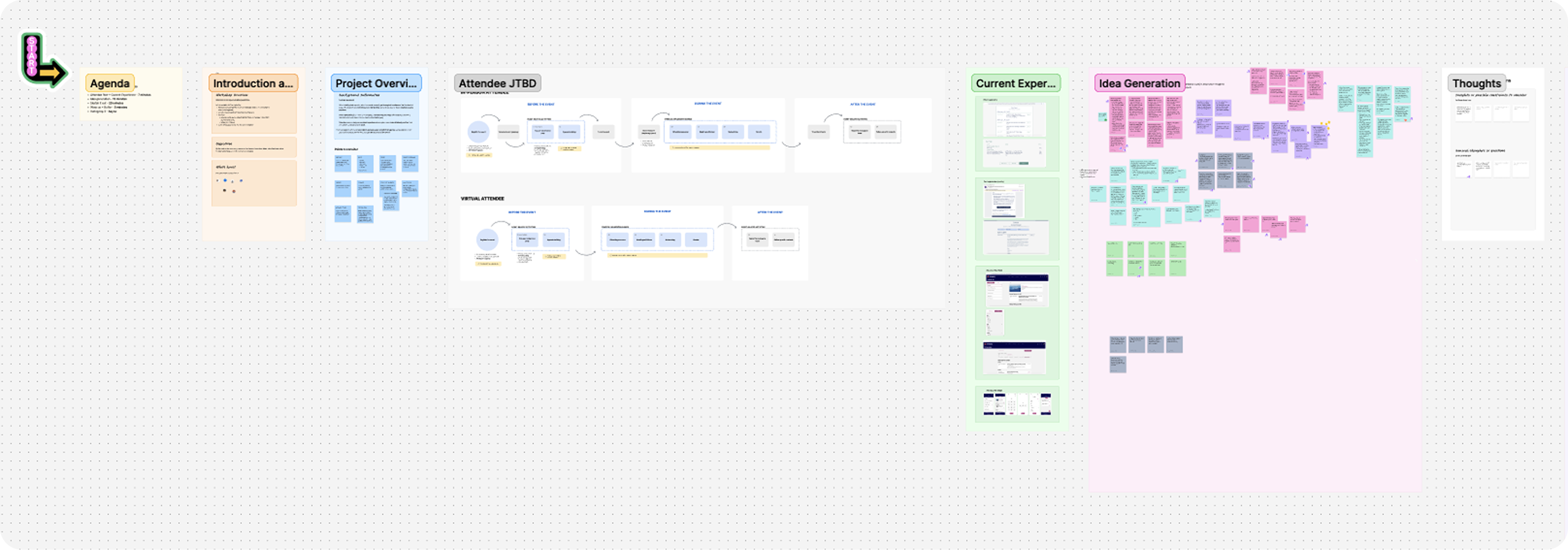

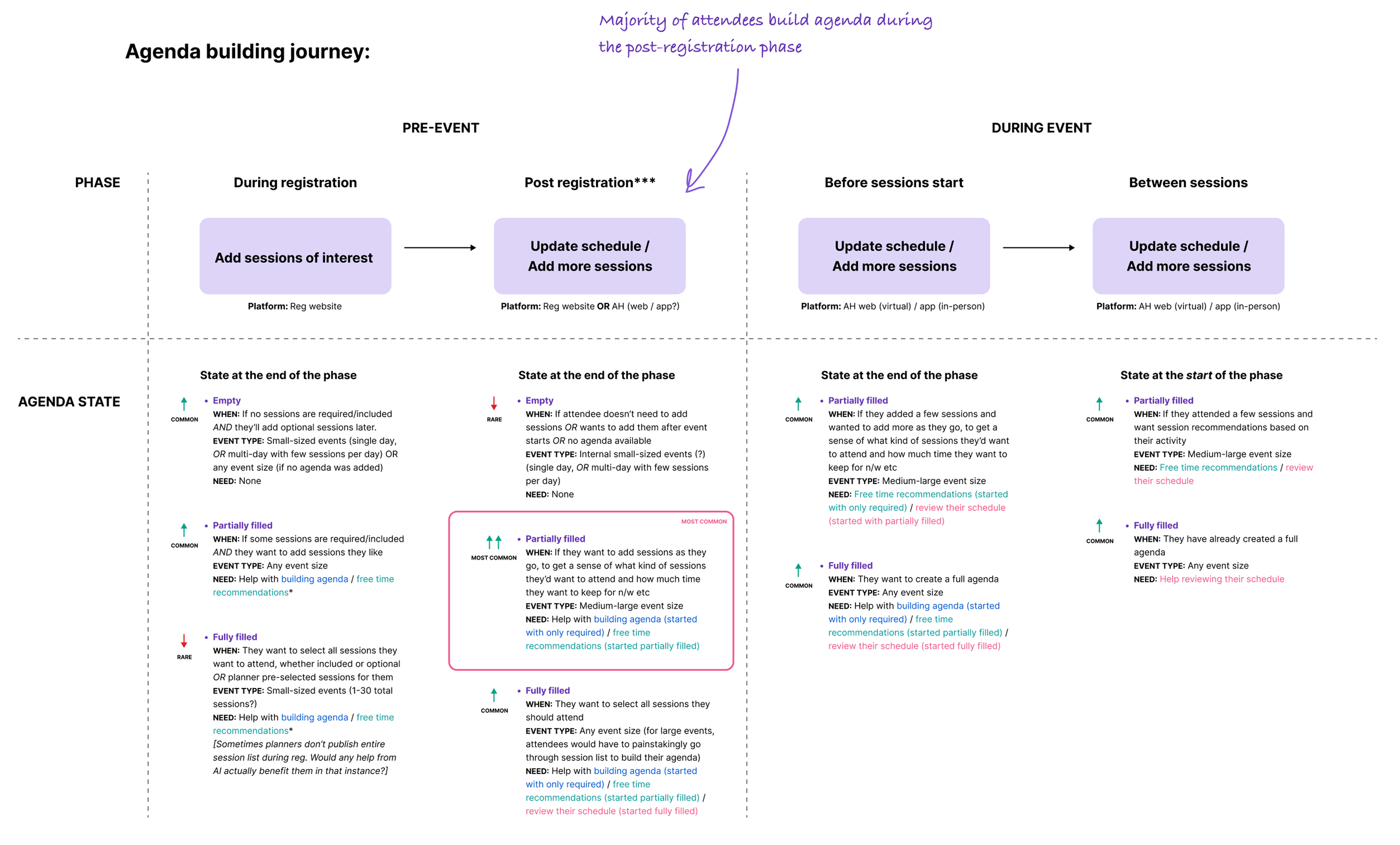

With a clear problem statement in hand, I moved into ideation. Using existing Jobs-to-be-Done research data, I created a detailed attendee journey map that deliberately extended well beyond the boundaries of Attendee Hub. The full journey covered the pre-event registration experience on a separate platform, where attendees could sign up for initial sessions, and then the in-event experience inside Attendee Hub after registration.

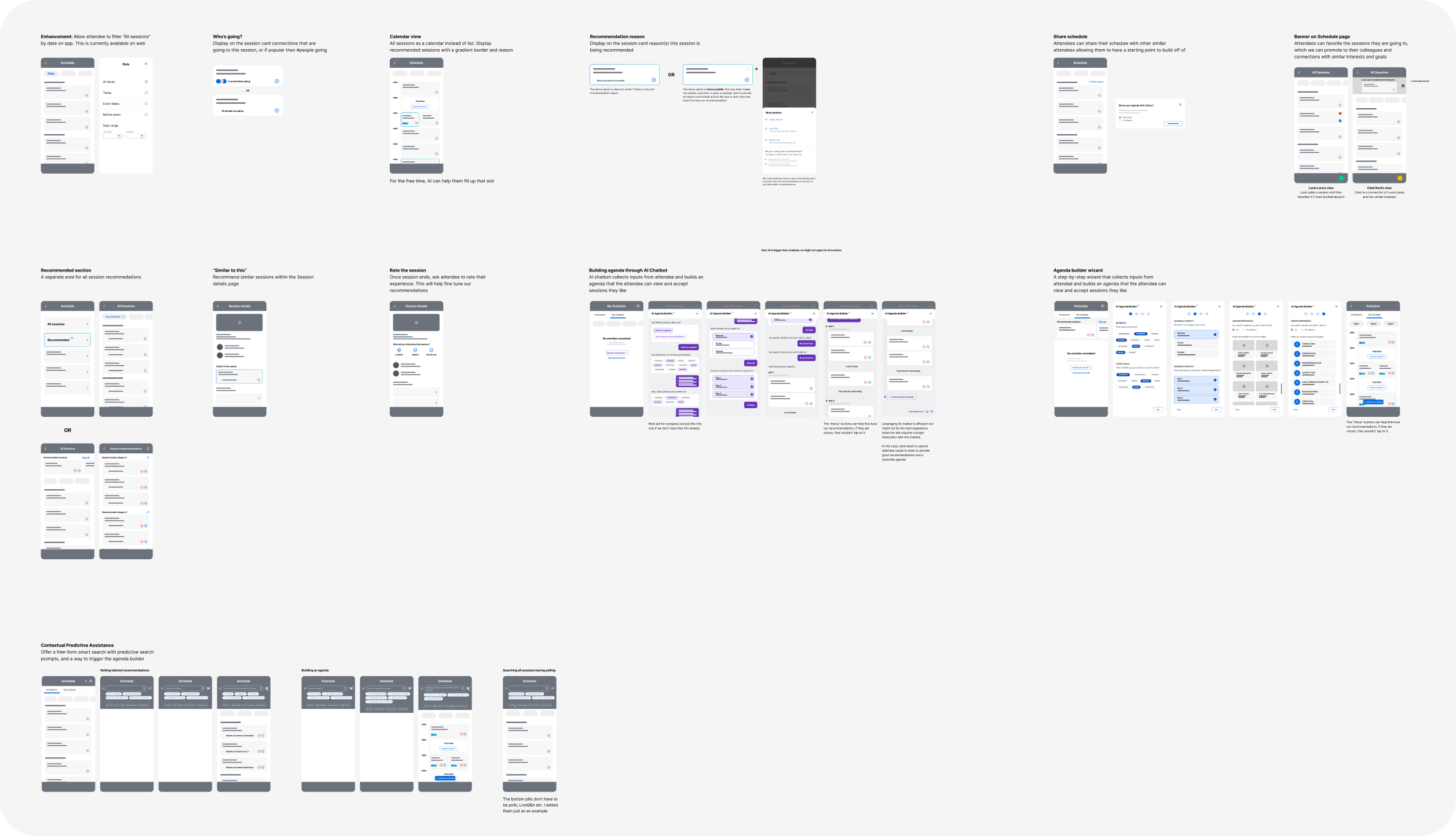

I facilitated a design ideation workshop with the design and research teams, using the journey map as a shared reference. Grounding participants in the attendee's full lifecycle, not just their in-app experience, led to a much richer set of ideas. The session generated concepts that spanned Attendee Hub and beyond, including suggestions for improving touchpoints in the registration flow. After the workshop, I wireframed the strongest concepts and presented them to design leadership and stakeholders, receiving positive and directional feedback.

But this created a new challenge: we had too many good ideas and no clear scope. To resolve this, I organized and facilitated an Opportunity Solution Tree (OST) workshop with my product partners and Design Manager. We stepped back to the OKR, mapped out the opportunities around it, listed the solutions each opportunity could yield, and surfaced the assumptions behind each.

This exercise narrowed the project to its final scope: an AI-powered recommendation flow that personalizes session suggestions based on attendee interests, goals, and behavioral data. The OST workshop was arguably the most strategically important step of the project. It happened before a single high-fidelity screen was drawn, and it set the direction for everything that followed.

Phase 04

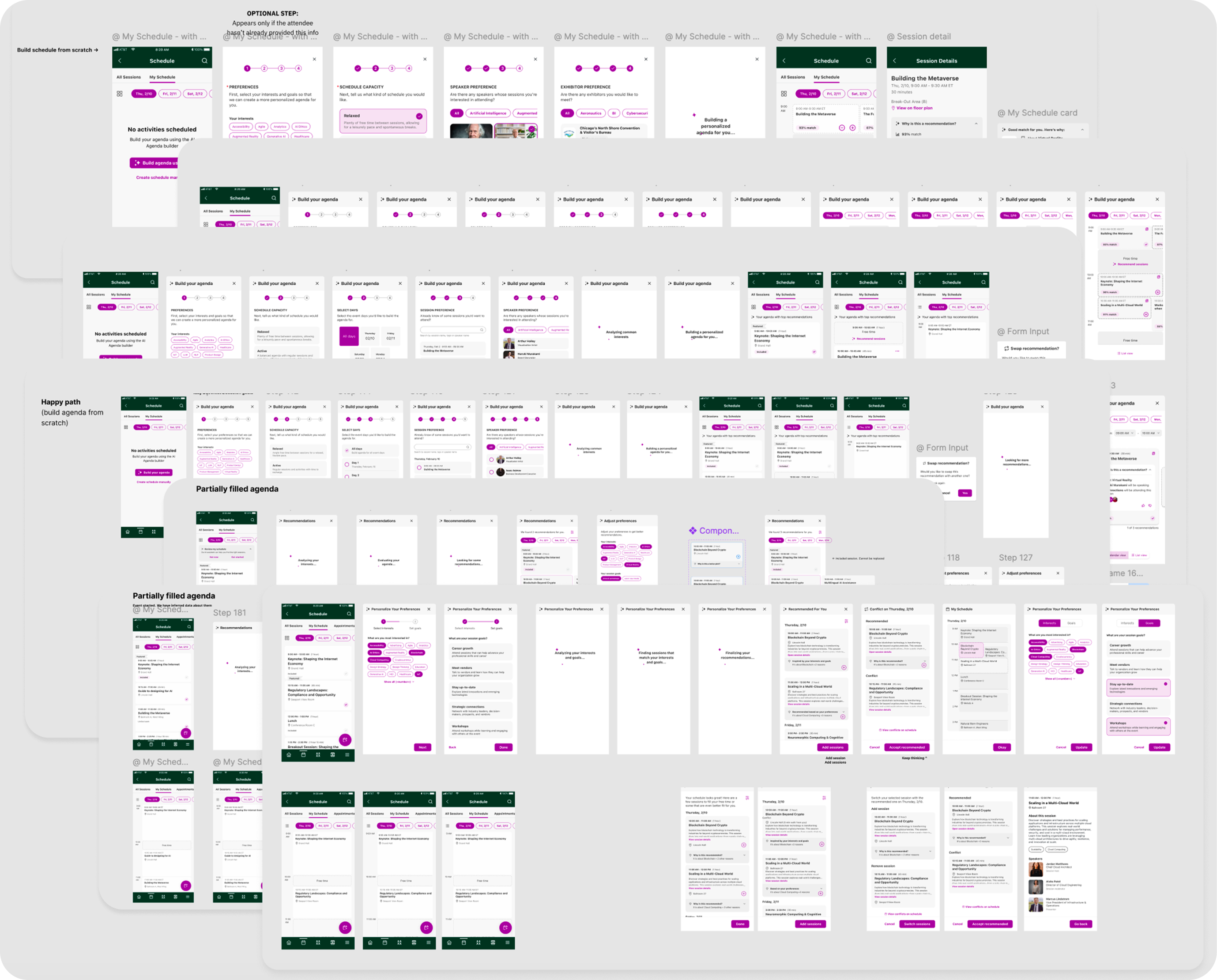

With clear direction, I began designing the solution. While designing the initial flow, we hypothesized that:

The designs went through multiple rounds of iteration: reviewing with engineering to check feasibility, presenting to stakeholders for feedback, and refining until we were confident enough to usability test them.

Phase 05

Working with our user researcher, we ran the first round of usability testing. It disproved both hypotheses.

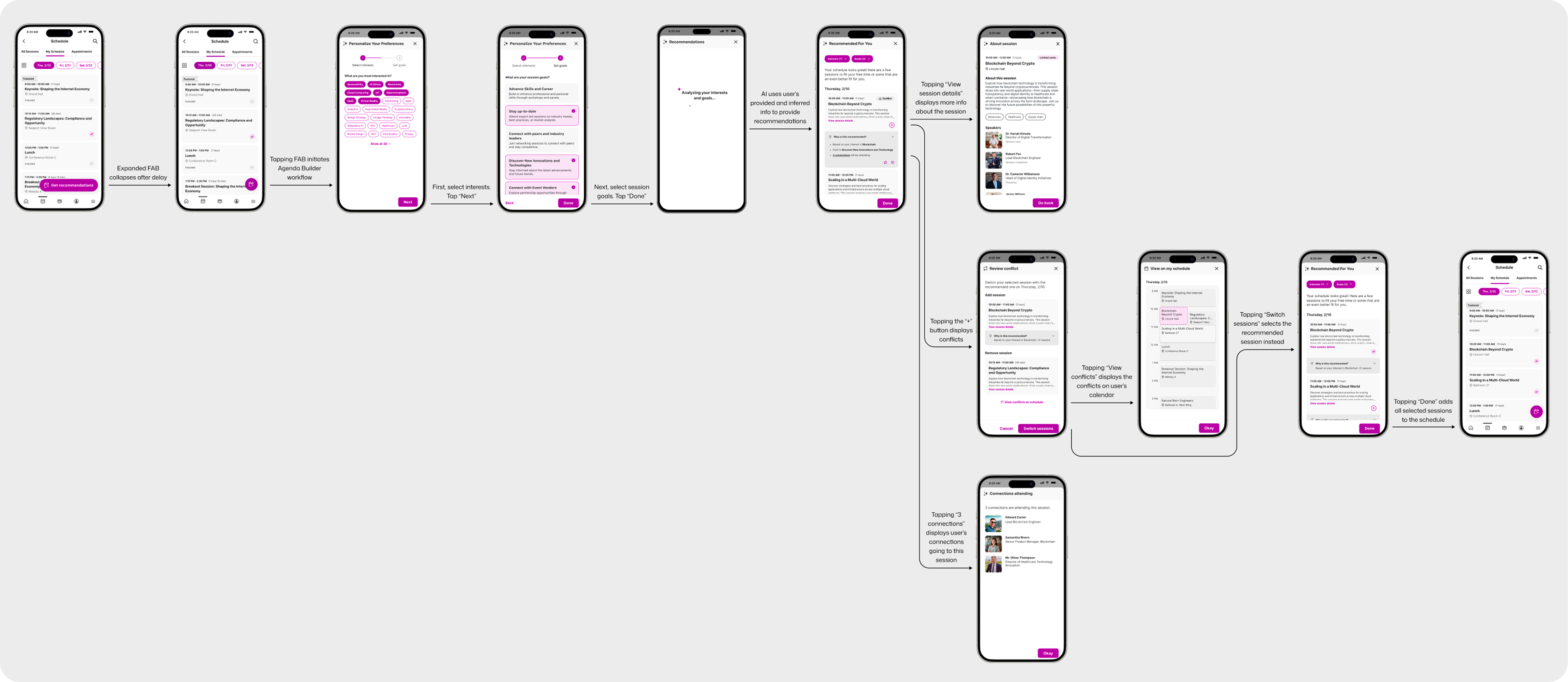

Missed entry point

The AI sparkle icon on the floating action button (FAB) was too small and went unrecognized. Users missed the feature entirely on their first visit.

Fix: The FAB label now expands with readable text when the user lands on the schedule page, then collapses after a short delay, making the feature unmissable on first visit without permanently cluttering the UI.

Unclear content

The language in the goal-selection step reduced user confidence and completion rates.

Fix: The copy was rewritten to be more direct and outcome-oriented, with clearer examples of what selecting each goal would mean for their recommendations.

Missed conflicts

Users expected an explicit warning when a recommended session conflicted with one already on their schedule. Without one, most participants didn't notice the conflict.

Fix: A high-visibility "Conflict" indicator was added to recommendation cards, plus a calendar view for reviewing and resolving overlaps before committing to a session switch.

After incorporating all changes, we ran a second round of usability testing. All participants described the updated flow as clear, intuitive, and easy to use. Conflict identification improved from 40% in round 1 to 100% in round 2.

The final design is a three-step workflow in which each step builds on the last, taking attendees from an empty or partially built schedule to a personalized, conflict-aware agenda.

Social Proof - Connections Attending

Based on insights from usability testing, a hyperlinked count of connections attending was also added to the recommendation card, bringing a genuine layer of social context into the decision.

Measurable usability improvement

Conflict identification went from 40% in round 1 to 100% in the final round of testing. All participants described the flow as clear, intuitive, and easy to use.

What I learned

The most valuable design work on this project happened before any screen was designed. Running the problem discovery workshop and then the OST scoping session meant the team was aligned, the scope was defensible, and the solution we built was solving the right problem.